
The Internet of Things has been a focus of ours over the past few years and an area we’ve developed a lot of expertise in. Our current offering consists of a fully-fledged end-to-end IoT platform that empowers both device makers and users via numerous capabilities.

On one hand, product makers need user and device management, device metrics, and product analytics. On the other hand, device owners enjoy varied control over their devices via a dedicated mobile app and web interface, can interact with their devices via virtual assistants like Amazon Alexa or Google Home, set device alerts, enable geolocation features, and more.

With an ever-growing list of features and a business need for modularity, we decided early on that a service-oriented approach was best suited to the problem at hand.

Developing a platform as a collection of microservices allows for modularity and smarter horizontal scaling of individual services but increases the complexity of deployment and the management of production environments. Fortunately, modern tools like Kubernetes greatly simplify the orchestration of services in production. This article is an overview of the architecture behind our IoT platform and our experience using Kubernetes in production.

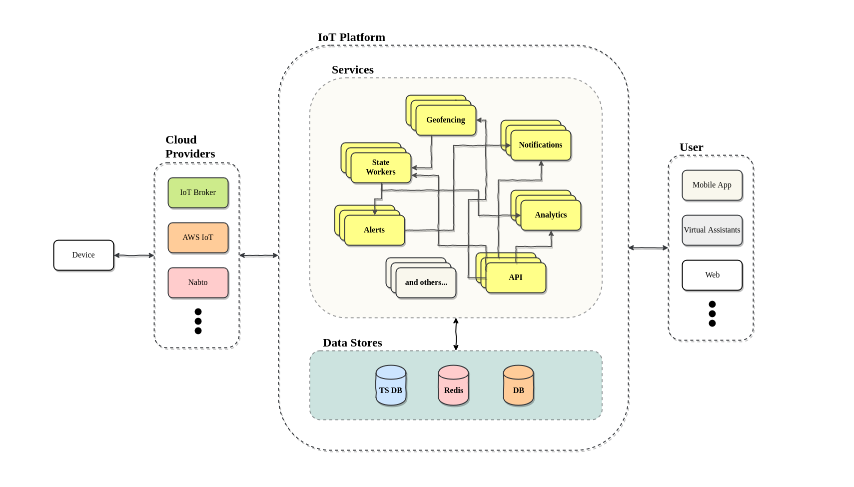

A service-based IoT platform

The above diagram gives a broad overview of the IoT system we run in production. It’s a lot to take in but we shall keep our focus on what these subsystems do and not how they do it, on how data flows through these services, and on how everything comes together to provide the full IoT experience that is delivered to customers. We’ll start from the outer sides of the diagram and work our way into the core, the IoT platform.

On the right-hand side, we have the end-user, the device owner. Users communicate with their devices via our cloud platform and can interact with them via the different interfaces that we support (app, web, virtual assistants). On the left-hand side, we have the actual IoT device – it could be a smart camera, light bulb, thermostat, or a complete IoT gateway. The device communicates with our platform via the device maker’s cloud provider of choice.

Device data broker (IoT cloud provider)

We currently support a number of cloud providers, such as AWS IoT and Nabto, but our platform is flexible enough that we can easily integrate with any third party of choice. We also offer our own data broker, represented as the “IoT Broker” in the diagram. In short, as far as we are concerned, a cloud provider communicates with physical devices, reports their state, reads data, and allows the user to publish changes back to those devices.

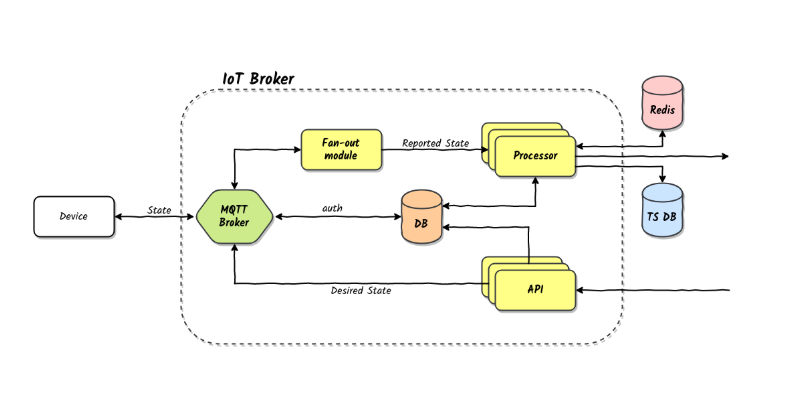

The diagram above pictures our own implementation of a cloud provider. It’s a simple but flexible implementation that is serving us well. The changes desired by the user reach the broker via HTTP API calls and are forwarded to the device via the MQTT broker. Data reported by the device also comes back via the MQTT broker and is pushed to our IoT platform via a message queue. Device data comes through much more often than user requests, so we’ve elected to process these reported changes asynchronously rather than use HTTP for this part of the communication. In our experience, performance is significantly higher as a result.
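The two directions of traffic described above can be sketched in a few lines of Python. This is an in-memory illustration only, not the actual broker: the topic layout (`devices/<id>/desired` and `devices/<id>/reported`), the class name, and the method names are all assumptions made for the example, and a plain list and `queue.Queue` stand in for the MQTT broker and the message queue.

```python
import json
import queue

# Hypothetical topic layout; the real topic names are not given in this article.
DESIRED_TOPIC = "devices/{device_id}/desired"
REPORTED_PREFIX = "devices/"
REPORTED_SUFFIX = "/reported"


class IoTBrokerSketch:
    """In-memory stand-in for the HTTP API, MQTT broker, and message queue."""

    def __init__(self):
        self.mqtt_outbox = []                # (topic, payload) pairs "published" to devices
        self.platform_queue = queue.Queue()  # reported state bound for the IoT platform

    def handle_desired_change(self, device_id, change):
        """Called by the HTTP API handler: forward a user's change to the device."""
        topic = DESIRED_TOPIC.format(device_id=device_id)
        self.mqtt_outbox.append((topic, json.dumps(change)))
        return topic

    def on_mqtt_message(self, topic, payload):
        """Called for each device message: enqueue reported state asynchronously."""
        if not (topic.startswith(REPORTED_PREFIX) and topic.endswith(REPORTED_SUFFIX)):
            return False  # not a reported-state topic, so it is ignored
        device_id = topic[len(REPORTED_PREFIX):-len(REPORTED_SUFFIX)]
        self.platform_queue.put({"device_id": device_id, "state": json.loads(payload)})
        return True
```

The point of the split is that `on_mqtt_message` only enqueues and returns, so a burst of device reports never waits on a downstream HTTP round trip.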

Devices communicate with the cloud via the MQTT protocol. The connection is encrypted and the MQTT broker authenticates each device using a credentials database that we control via the broker’s HTTP API. Security has often been a hot topic in the IoT industry but we’ve always been keen on following best practices regarding the security and privacy of data.

MQTT scaling

Data reported by each device comes in via device-specific MQTT topics. The MQTT broker then broadcasts these messages to any subscribed client. In order to process messages in parallel, you cannot simply have multiple instances of the same service subscribe to the same topics. They would all end up doing the same work multiple times. A scaling strategy must be put in place to enable parallelism.

Since we already use queues to pass messages asynchronously between services we elected to simply add a small fan-out service in between the MQTT broker and the data processing services. This service reads all messages and puts them in a work queue. Multiple horizontally scaled data processing units are then able to subscribe to the same queue and work in parallel. We’ve stress-tested this model in practice and had good results, scaling up to 50,000 messages per second on modest AWS machines.
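To make the fan-out idea concrete, here is a minimal sketch using only the Python standard library. A `queue.Queue` stands in for the RabbitMQ work queue and threads stand in for horizontally scaled processing instances; the function names and the `None` sentinel are assumptions of the example, not part of the production system.

```python
import queue
import threading


def fan_out(mqtt_messages, work_queue):
    """Single subscriber: copy every MQTT message into the shared work queue."""
    for topic, payload in mqtt_messages:
        work_queue.put((topic, payload))


def worker(name, work_queue, results, lock):
    """One data-processing instance: each queued message goes to exactly one worker."""
    while True:
        item = work_queue.get()
        if item is None:  # sentinel: no more work for this worker
            break
        topic, payload = item
        with lock:
            results.append((name, topic, payload))


def process_in_parallel(mqtt_messages, worker_count=3):
    """Run the fan-out service and several workers, returning what each processed."""
    work_queue = queue.Queue()
    results, lock = [], threading.Lock()
    threads = [
        threading.Thread(target=worker, args=(f"worker-{i}", work_queue, results, lock))
        for i in range(worker_count)
    ]
    for t in threads:
        t.start()
    fan_out(mqtt_messages, work_queue)
    for _ in threads:
        work_queue.put(None)  # one sentinel per worker
    for t in threads:
        t.join()
    return results
```

Because only the fan-out function reads from MQTT, no message is processed twice, while the number of workers can grow independently of the broker.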

A different strategy that we considered is sharding: each data processor is configured to subscribe to only a subset of MQTT topics. In principle this scales further than a single work queue, because no one component has to see every message, but it requires careful configuration and coordination between services. We’ve elected to keep things simple for now, with good practical results.
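For comparison, a sharded assignment could look roughly like the sketch below. It assumes every processor knows the total shard count and its own index, and it uses a stable hash (SHA-256) rather than Python's built-in `hash()`, which is randomized per process. The function names are hypothetical, and how topics are enumerated or mapped to MQTT subscriptions is left out.

```python
import hashlib


def shard_for_topic(topic, shard_count):
    """Map a topic to a shard index that is stable across processes and restarts."""
    digest = hashlib.sha256(topic.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


def topics_for_shard(topics, shard_index, shard_count):
    """Return the subset of topics that a given processor should subscribe to."""
    return [t for t in topics if shard_for_topic(t, shard_count) == shard_index]
```

The coordination cost mentioned above shows up as soon as `shard_count` changes: most topics move to a different shard, so every processor has to be reconfigured at once.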

A platform as a collection of services

The IoT platform in the middle is what makes everything work. All the features described in the beginning reside here.

Rather than build a monolithic platform that would’ve very likely grown into an unmaintainable and overly complicated mess, we’ve elected to separate concerns as much as possible so as to make the system as modular as possible.

We are able to easily turn features on and off by simply adding or removing services in production. Not every device vendor needs every single feature and we are able to quickly accommodate everyone’s needs. We are also able to quickly expand our system with new services depending on the vendor’s requirements. Almost all vendors have asked for customized services on our side and thanks to this architecture we’ve been able to easily integrate new code into the system.

As a quick overview, here’s what each service in the above diagrams handles:

- geofencing: receives geolocation events from users’ phones and updates the state of the device accordingly, for example turning off the heat when the user leaves home

- notifications: handles sending email, text messages, and push notifications

- analytics: handles data and metrics collected from devices

- API: allows 3rd party services to integrate with the platform

- alerts: checks whether alert conditions are met and triggers notifications

- state workers: process changes in device state received from the cloud provider

There are a bunch of other services that handle various internal tasks, as well as periodic jobs that run as separate services, but the above-mentioned are most closely related to user-accessible features.
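As an illustration of how small each of these services can be, here is a hedged sketch of the core of an alerts check: compare a device's reported state against a set of rules and return the messages that should be handed to the notifications service. The `AlertRule` shape, the operator table, and the metric names are invented for the example.

```python
import operator
from dataclasses import dataclass

# Comparison operators an alert rule may use (an assumption of this sketch).
OPERATORS = {
    ">": operator.gt,
    ">=": operator.ge,
    "<": operator.lt,
    "<=": operator.le,
    "==": operator.eq,
}


@dataclass(frozen=True)
class AlertRule:
    metric: str       # key in the reported device state, e.g. "temperature"
    op: str           # one of the keys in OPERATORS
    threshold: float
    message: str      # text passed on to the notifications service


def check_alerts(rules, reported_state):
    """Return the messages of every rule whose condition holds for this state."""
    triggered = []
    for rule in rules:
        value = reported_state.get(rule.metric)
        if value is None:
            continue  # the device did not report this metric
        if OPERATORS[rule.op](value, rule.threshold):
            triggered.append(rule.message)
    return triggered
```

For example, a rule `AlertRule("temperature", ">", 30.0, "Too hot")` fires for a reported state of `{"temperature": 31.2}` and stays silent for `{"temperature": 21.0}`.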

The data layer includes InfluxDB, Redis, and PostgreSQL. We store time-series data in InfluxDB, the most important being device-reported data. This allows us to generate reports, show charts, and compute various other metrics. Redis is used mainly as a cache and PostgreSQL is used for all other data storage needs.
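Device-reported data maps naturally onto InfluxDB's line protocol, where each point has a measurement, optional tags, one or more fields, and a timestamp. The helper below is a minimal sketch of that text format; the measurement, tag, and field names are made up for the example, and a real service would more likely rely on an InfluxDB client library than format lines by hand.

```python
def _escape(text, chars):
    """Backslash-escape each of the given characters in text."""
    for ch in chars:
        text = text.replace(ch, "\\" + ch)
    return text


def _format_field(value):
    """Render a field value: booleans, integers (i suffix), floats, or quoted strings."""
    if isinstance(value, bool):  # must be checked before int, since bool is an int
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def to_line_protocol(measurement, tags, fields, timestamp_ns):
    """Build one line-protocol point; tags are sorted by key."""
    if not fields:
        raise ValueError("at least one field is required")
    head = _escape(measurement, ", ")
    for key in sorted(tags):
        head += f",{_escape(key, ',= ')}={_escape(str(tags[key]), ',= ')}"
    body = ",".join(f"{_escape(k, ',= ')}={_format_field(v)}" for k, v in fields.items())
    return f"{head} {body} {timestamp_ns}"
```

Keeping the device identifier as a tag rather than a field is what makes per-device charts and reports cheap to query, since tags are indexed.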

Containers, containers, containers

Containers are at the heart of almost any modern service-oriented architecture. Combined with container orchestration tools like Kubernetes, deploying and maintaining service containers has become a pleasant experience, free of those dreaded midnight service calls.

Each of the pictured services is deployed as a Docker container using Kubernetes. We build images containing only the code and configuration required to run each service. Kubernetes then launches containers for each of these services, handles the passing of environment variables and secrets to them, and continuously monitors them for any faults in either the application logic or the underlying physical infrastructure. Faulty machines or containers having temporary issues no longer require any manual intervention on our side.

Service communication

All services deployed across our system need to communicate in some form or another with either the outside world or among themselves. For this purpose, we chose two protocols: HTTP and AMQP. All outside world requests come in via HTTP calls to our REST APIs. Communication between services, however, happens over AMQP via RabbitMQ.

Most of our services are asynchronous in nature and are well served by work queues. Should one service need to send an email to a user, there’s no point in waiting for the notifications service to confirm the email has been sent. The notifications service has the responsibility of eventually sending that email. While this is happening, the calling service can go back to doing what it was designed to do.

Using queues simplifies the code, as there are fewer error conditions to be handled when requested services are not available. The system as a whole benefits because individual services do not have to worry about work not being done. Should a service die before it finishes work, it will not acknowledge the message and RabbitMQ will simply put the message back in the work queue for another instance to pick it up. Kubernetes is also free to kill and reassign services across machines without any fear of losing data.
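The acknowledge-or-requeue behaviour can be modelled in a few lines. The class below is a toy, in-memory imitation of the semantics described above, not a RabbitMQ client: a message moves to an "unacknowledged" set when delivered, is dropped only on `ack`, and returns to the queue if its consumer dies first. The class and method names are assumptions of the sketch.

```python
from collections import deque


class WorkQueueSketch:
    """Toy model of at-least-once delivery with explicit acknowledgements."""

    def __init__(self, messages):
        self._ready = deque(messages)  # messages waiting for a consumer
        self._unacked = {}             # delivery tag -> message awaiting ack
        self._next_tag = 0

    def get(self):
        """Deliver the next message, or None if the queue is empty."""
        if not self._ready:
            return None
        self._next_tag += 1
        message = self._ready.popleft()
        self._unacked[self._next_tag] = message
        return self._next_tag, message

    def ack(self, tag):
        """The consumer finished the work, so the message is gone for good."""
        del self._unacked[tag]

    def consumer_died(self, tags):
        """Put back every delivery the dead consumer never acknowledged."""
        for tag in sorted(tags, reverse=True):
            message = self._unacked.pop(tag, None)
            if message is not None:
                self._ready.appendleft(message)

    def pending(self):
        """Number of messages waiting for delivery."""
        return len(self._ready)
```

The consequence for service code is that handlers should be safe to run twice, because a message whose work finished just before a crash, but before the acknowledgement, will be delivered again.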

Scaling

One area where our service-oriented architecture has shined in practice is scaling. With a few clicks, we are able to go from two instances of a service to as many as we like. If the system is under heavy load, Kubernetes can also scale services automatically. Should the horizontal scaling of a service demand more resources than are available, the Kubernetes cluster autoscaler can also provision extra machines for us. We’ve deployed our services to both AWS and GCE and had good results with the cloud integration Kubernetes provides out of the box.
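For a sense of how automatic scaling decides on a replica count, the Kubernetes documentation gives the Horizontal Pod Autoscaler's core rule as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The sketch below applies that rule with a minimum and maximum bound; it leaves out the tolerance band and stabilization behaviour of the real controller, and the metric values in the usage note are made-up numbers.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Apply the HPA scaling rule, clamped to the configured replica bounds."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * (current_metric / target_metric))
    return max(min_replicas, min(max_replicas, raw))
```

With two replicas averaging 90% CPU against a 50% target, `desired_replicas(2, 90, 50)` asks for four replicas; once load drops to 20% across four replicas, `desired_replicas(4, 20, 50)` scales back down to two.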

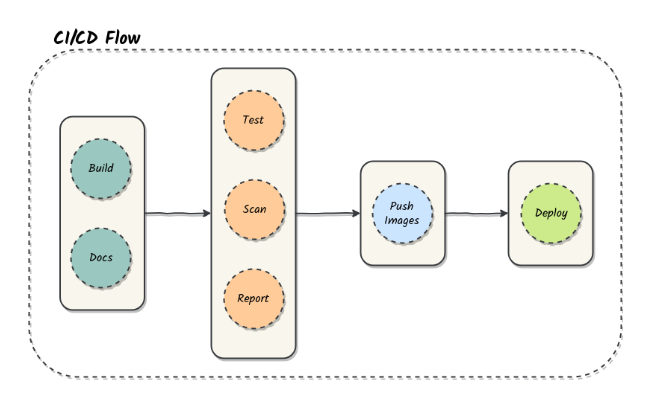

Continuous delivery

In spite of the complex architecture at hand, the deployment experience has become a very pleasant one, thanks to Kubernetes and Jenkins. Developers don’t need to concern themselves with deployment details and all they have to do to get their code running in a live environment is push their code and documentation to the repository.

The CI/CD system then kicks in and goes through the appropriate motions. Docker images are built, and documentation is automatically generated and uploaded. Tests run on every built image and the deployment process stops should any of them fail. Code quality tools (code formatters/linters, SonarQube, and others) scan the code and report any issues ranging from coding style to possible security issues.

If all is well and all steps complete successfully, the images are automatically pushed and new Kubernetes deployments using the most recent images are applied to the appropriate cluster. We are able to go from code pushed to code in production in less than 5 minutes and do so with zero downtime. Kubernetes handles the blue-green deployment of our services and our message queues ensure no work requests get lost.

Lessons learned

Running a distributed system in production is never an easy task and not everything runs smoothly all the time. Kubernetes in particular, although very powerful, is also quite a complex tool, with a fairly steep learning curve. A few things we’ve learned:

Invest in a good logging and monitoring setup

Containers should be distributed across machines according to their resource requirements and such that resource usage is maximized across the entire cluster. The Kubernetes scheduler is able to meet these requirements most of the time but it requires some help in making good scheduling decisions.

Both resource requests and usage limits can be configured manually for each Kubernetes pod. We see this as a must. In the beginning we had issues with services that did not have these resource values defined: they would end up overcrowding certain physical machines and, in the process, bring them down entirely. Continuous monitoring of resource usage and the fine-tuning of configuration have greatly improved the overall stability and performance of the entire distributed system.
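As a sketch of what this configuration looks like, the snippet below builds a Deployment manifest with explicit `resources.requests` and `resources.limits` for its container and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The service name, image, and resource figures are placeholders rather than our production values.

```python
import json


def deployment_manifest(name, image, replicas,
                        cpu_request, memory_request, cpu_limit, memory_limit):
    """Build an apps/v1 Deployment with resource requests and limits set."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "resources": {
                            # What the scheduler reserves when placing the pod.
                            "requests": {"cpu": cpu_request, "memory": memory_request},
                            # Hard ceiling enforced on the running container.
                            "limits": {"cpu": cpu_limit, "memory": memory_limit},
                        },
                    }],
                },
            },
        },
    }


# Placeholder values for illustration only.
manifest = deployment_manifest(
    "alerts", "registry.example.com/alerts:1.0.0", 2,
    cpu_request="100m", memory_request="128Mi",
    cpu_limit="500m", memory_limit="256Mi",
)
print(json.dumps(manifest, indent=2))
```

Requests are what the scheduler uses to decide where a pod fits, so leaving them unset is what allows too many pods to land on the same machine.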

Performance critical data stores may still be best kept outside containers

Containers are generally considered stateless units of computation. Not handling any state keeps them nimble and easy to destroy and recreate at will. While this model works remarkably well for most services, it is not a particularly great match for data stores, and it may be even worse when it comes to scaled data stores.

It’s true, of course, that state persistence can always be enabled in a container by mounting an external volume. It’s been our experience, however, that this process is not always smooth. Then there’s the issue of performance. Network mounted volumes at the major cloud providers don’t necessarily offer impressive performance (unless you are willing to spend a lot).

On AWS we sometimes had the issue of volumes not mounting smoothly, taking up to a few minutes to get attached to the proper machine and the container residing on it. This is simply unacceptable for a critical system component. If the data store is not replicated or scaled, you’re without a database for quite a while for no good reason. Replicating and scaling a database using container orchestration isn’t exactly straightforward and can be a hassle to manage properly in production.

For the sake of simplicity and stability, we’ve elected to keep our data stores on larger separate machines using dedicated SSD drives. We might look into moving data stores to the cluster in the future, but for now we see little advantage in doing so. We are using containerized data stores for some of our temporary testing environments, as it makes the whole process of bringing entire environments up and down very simple, but we still have a way to go before that setup is production-ready.